What Is Load Balancing?

Load balancing is the process of distributing incoming requests across multiple servers within a server farm or pool. Instead of a single machine bearing the entire workload, requests are intelligently directed to the most available and capable resources in the server pool. This prevents individual web servers from becoming bottlenecks, helping maintain optimal throughput and minimize response times.

By decoupling traffic from any single server, load balancing enables horizontal scalability. As demand grows, organizations can handle thousands of concurrent requests by adding servers or Points of Presence (PoPs) to the pool.

How Does Load Balancing Work?

Load balancing operates through continuous evaluation of server availability, capacity, and response behavior. Health checks and performance signals guide each routing decision, so requests go to the resources best able to handle them. If a server becomes unresponsive or overloaded, traffic is redirected automatically to maintain service continuity.
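The evaluation loop described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product works: the `Backend` class, the `capacity` field, and the "most spare capacity" rule are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    # Hypothetical record of what a balancer might track per server.
    name: str
    healthy: bool = True
    active_requests: int = 0
    capacity: int = 100


def eligible(backends):
    """Return backends that pass the health check and still have headroom."""
    return [b for b in backends if b.healthy and b.active_requests < b.capacity]


def route(backends):
    """Pick the eligible backend with the most spare capacity."""
    candidates = eligible(backends)
    if not candidates:
        raise RuntimeError("no healthy backend available")
    return max(candidates, key=lambda b: b.capacity - b.active_requests)


pool = [
    Backend("app-1", active_requests=40),
    Backend("app-2", active_requests=10),
    Backend("app-3", healthy=False),
]

first_choice = route(pool).name     # app-2 has the most headroom
pool[1].healthy = False             # simulate app-2 failing its health check
fallback_choice = route(pool).name  # traffic moves to app-1
```

In a real deployment the `healthy` flag would be updated by periodic probes rather than set by hand.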

At the core of this process are load balancers, which serve as the control layer responsible for making and enforcing these routing decisions. Deployed as dedicated appliances or software-based controllers, load balancers monitor backend resources and apply distribution logic in real time.

In many environments, the Application Delivery Controller (ADC) extends this functionality by executing load balancing policies at scale and responding to changing network conditions. While the decision logic remains consistent, load balancers differ in how and where they operate, with distinct types optimized for specific layers and deployment scenarios.

Types of Load Balancers

Load balancers can be categorized into several types based on their operating layer, geographic scope, and deployment model:

Network Load Balancer

Operating at the transport layer, network load balancers route traffic based on IP address and TCP/UDP ports. Without inspecting packet content, they achieve high throughput and minimal latency, making them ideal for handling massive traffic volumes when speed is paramount and deep packet inspection is unnecessary.

Application Load Balancer

Working at the application layer, L7 balancers evaluate HTTP headers, SSL session IDs, and user requests. This context-aware approach allows for highly granular traffic steering, such as directing image requests to a specialized media server while routing API calls to a different pool.
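As a rough sketch of this kind of content-based steering, the function below picks a pool from the request path and the `Accept` header. The pool names, the `/api/` prefix, and the extension list are hypothetical values chosen for illustration.

```python
# Hypothetical file extensions treated as media requests.
MEDIA_EXTENSIONS = (".png", ".jpg", ".jpeg", ".gif", ".webp")


def choose_pool(path: str, headers: dict) -> str:
    """Return the name of the backend pool for a request (illustrative rules)."""
    if path.startswith("/api/"):
        return "api-pool"
    wants_image = headers.get("Accept", "").startswith("image/")
    if path.lower().endswith(MEDIA_EXTENSIONS) or wants_image:
        return "media-pool"
    return "web-pool"


api_target = choose_pool("/api/v1/orders", {})
image_target = choose_pool("/img/logo.PNG", {})
page_target = choose_pool("/index.html", {"Accept": "text/html"})
```

A transport-layer balancer could not apply these rules, because the path and headers are only visible once the HTTP request has been parsed.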

Cloud Load Balancer

Cloud load balancers distribute traffic through managed virtual instances that scale automatically with demand within cloud platforms. They offer flexible resource distribution without manual hardware configuration.

Global Server Load Balancing (GSLB)

GSLB extends traffic management across multiple geographic regions. It utilizes distributed server farms to direct users to the nearest data center, reducing latency and improving user experience. Global orchestration also provides failover mechanisms: if a region experiences an outage, traffic is automatically rerouted to healthy sites worldwide.
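The nearest-site selection and regional failover described here can be approximated with a distance calculation. This sketch assumes great-circle distance is a good enough stand-in for network proximity. Production GSLB systems commonly rely on DNS and measured latency instead. The site names and coordinates are illustrative.

```python
import math


def haversine_km(a, b):
    """Great-circle distance in kilometres between two (lat, lon) pairs."""
    lat1, lon1, lat2, lon2 = map(math.radians, (a[0], a[1], b[0], b[1]))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


# Hypothetical data centers with approximate coordinates.
SITES = {
    "frankfurt": {"coords": (50.11, 8.68), "healthy": True},
    "singapore": {"coords": (1.35, 103.82), "healthy": True},
    "virginia": {"coords": (38.95, -77.45), "healthy": True},
}


def nearest_healthy_site(user_coords, sites):
    """Return the name of the closest site that is currently healthy."""
    healthy = {name: s for name, s in sites.items() if s["healthy"]}
    if not healthy:
        raise RuntimeError("no healthy site available")
    return min(healthy, key=lambda n: haversine_km(user_coords, healthy[n]["coords"]))


paris = (48.86, 2.35)
primary = nearest_healthy_site(paris, SITES)   # frankfurt is closest
SITES["frankfurt"]["healthy"] = False          # simulate a regional outage
failover = nearest_healthy_site(paris, SITES)  # next closest healthy site
```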

Hardware vs. Software Load Balancing

Load balancing can be implemented via hardware-based appliances or software-defined solutions. Hardware load balancers are dedicated physical devices installed on premises to handle high-performance traffic. While proprietary appliances offer massive throughput and specialized processing power, they involve significant upfront costs and manual maintenance.

Software load balancers run on standard servers or within virtualized environments. Software-defined controllers offer the same core benefits as physical hardware with greater scalability and lower overhead. Organizations can rapidly adjust capacity and deploy security updates without physical hardware constraints.

Load Balancing Benefits

Load balancing delivers consistent operational benefits across deployment models, particularly in environments with fluctuating traffic and strict uptime expectations.

Scalability for Traffic Spikes

Traffic spikes can quickly overwhelm servers during high-demand periods such as holiday seasons or promotional events. Under these conditions, load balancing enables organizations to dynamically adjust server capacity and distribute workloads, keeping applications responsive as demand rises. For e-commerce platforms, scalable traffic handling directly affects revenue outcomes, since stable performance determines whether customer demand converts into completed purchases or churn.

Redundancy and Downtime Prevention

Traffic surges increase the risk of server failure by concentrating demand on limited infrastructure. Load balancing reduces this risk by distributing applications across multiple web servers, preventing single points of failure from disrupting service availability. When one server or point of presence becomes unavailable, traffic can be automatically redirected to a functioning location, allowing services to continue without interruption. Active-passive architectures strengthen redundancy by enabling reliable failovers during hardware or software malfunctions. Within this framework, CDNetworks’ Origin Load Balancing supports enterprise deployments by monitoring PoP health and shifting traffic as needed to maintain stability.

Flexibility for Maintenance

Routine maintenance often disrupts services when production traffic remains tied to a limited set of active servers. Load balancing improves operational flexibility by allowing user traffic to be diverted to passive servers during maintenance windows. Through configuration controls, IT teams can route active traffic to designated servers while updates and security patches are applied elsewhere. Maintenance tasks can proceed on idle servers while changes are tested in a live environment. After validation, the load balancer restores the updated server to active status, allowing maintenance activities to complete without requiring a full service shutdown.
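One way to picture this workflow is as a small state machine: a server is drained, patched, and then restored. The `State` names and the `Server` class below are assumptions for illustration. Real load balancers expose this through their own configuration controls.

```python
from enum import Enum


class State(Enum):
    ACTIVE = "active"            # receives new requests
    DRAINING = "draining"        # finishes in-flight requests, accepts no new ones
    MAINTENANCE = "maintenance"  # idle and safe to patch


class Server:
    def __init__(self, name):
        self.name = name
        self.state = State.ACTIVE
        self.in_flight = 0

    def start_drain(self):
        self.state = State.DRAINING
        self._settle()

    def finish_request(self):
        self.in_flight -= 1
        self._settle()

    def _settle(self):
        # A draining server becomes idle once its last request completes.
        if self.state is State.DRAINING and self.in_flight == 0:
            self.state = State.MAINTENANCE

    def restore(self):
        self.state = State.ACTIVE


def accepting(servers):
    """Names of servers that may receive new requests."""
    return [s.name for s in servers if s.state is State.ACTIVE]


web1, web2 = Server("web-1"), Server("web-2")
web1.in_flight = 1
web1.start_drain()
during_drain = accepting([web1, web2])   # only web-2 takes new traffic
state_while_busy = web1.state            # still draining one request
web1.finish_request()
state_when_idle = web1.state             # now safe to patch
web1.restore()
after_restore = accepting([web1, web2])  # both servers active again
```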

Proactive Failure Detection and Performance Optimization

Managing traffic across multiple data centers requires early awareness of infrastructure failures. Load balancing supports this requirement by identifying server outages and redirecting traffic away from affected locations, allowing services to remain available. Once failures are isolated, routing decisions can focus on performance optimization, which becomes critical in distributed PoP environments.

The same routing logic also improves performance. Region-aware origin selection reduces latency by keeping requests within nearby infrastructure and avoiding unnecessary cross-region transfers. Faster responses help maintain user experience while issues are resolved in the background.

DDoS Attack Mitigation

Distributed Denial of Service (DDoS) attacks overwhelm infrastructure by flooding a single entry point with excessive traffic. In such scenarios, relying on a single server significantly increases the risk of service disruption. Load balancing mitigates this risk by distributing incoming traffic across multiple servers, preventing any one system from becoming a bottleneck. When attack traffic targets a specific server, traffic can be rerouted to available resources, reducing the exposed attack surface. As a result, services remain accessible, and the network becomes more resilient to sustained attack attempts.

Common Load Balancing Algorithms

Load balancing algorithms define how incoming traffic is routed to backend servers. Different decision models address distinct operational needs, influencing stability, performance, and resource utilization under load.

Round Robin

Round Robin distributes incoming requests sequentially across available servers. Each request is forwarded to the next server in the cycle, returning to the first server after the last server is reached. Round Robin is simple and easy to implement, making it suitable for environments where servers have similar capacity and performance characteristics. However, Round Robin assumes uniform workloads and does not account for real-time server loads, which can lead to imbalance when traffic patterns fluctuate.

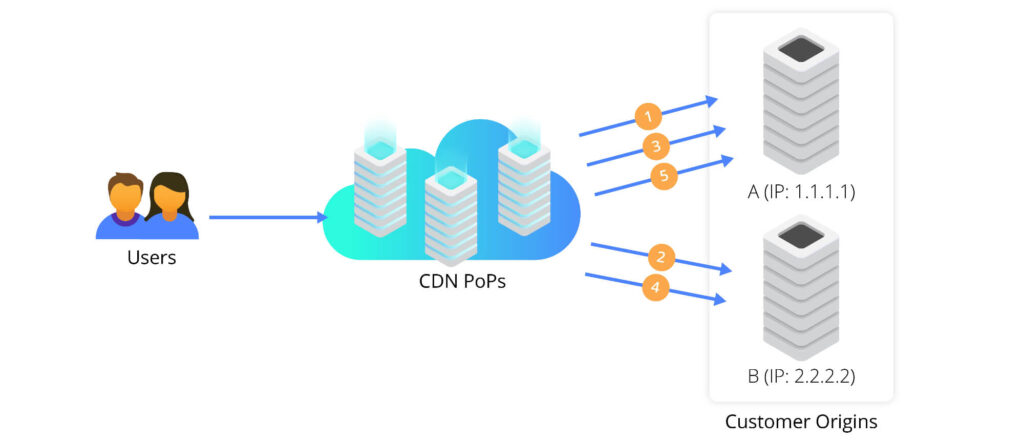

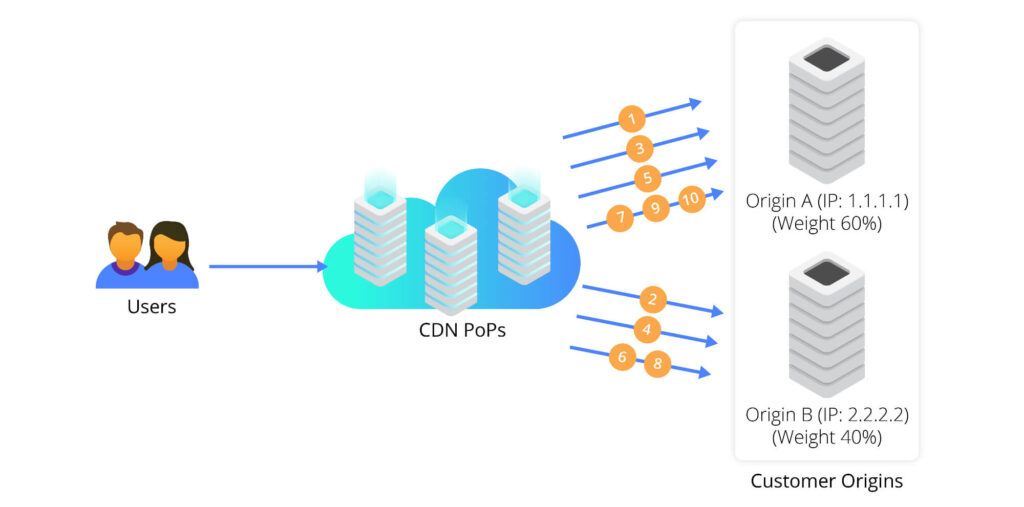
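The rotation can be sketched with Python's `itertools.cycle`, which loops over a list indefinitely. The server names are placeholders.

```python
from itertools import cycle

servers = ["srv-a", "srv-b", "srv-c"]  # hypothetical backend names
rotation = cycle(servers)

# Assign five consecutive requests; the fourth wraps back to the first server.
assignments = [next(rotation) for _ in range(5)]
# assignments == ['srv-a', 'srv-b', 'srv-c', 'srv-a', 'srv-b']
```

Note that the rotation ignores how busy each server is, which is the limitation described above.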

Weighted Round Robin

Weighted Round Robin extends the basic Round Robin model by accounting for differences in server capacity. Each server is assigned a weight that reflects its relative processing power or available resources. Requests are distributed proportionally according to these weights, allowing higher-capacity servers to handle more traffic. Weighted Round Robin is commonly used in multi-origin environments where backend infrastructure is heterogeneous and workload distribution must align with hardware capabilities.
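There are several ways to implement proportional distribution. The sketch below uses the "smooth" variant, which interleaves picks instead of sending a burst to the heaviest server. This is one possible approach, not necessarily the one any given product uses. The class name and weights are assumptions.

```python
class SmoothWeightedRoundRobin:
    """Weighted rotation that spreads each server's turns across the cycle."""

    def __init__(self, weights):
        self.weights = dict(weights)
        self.current = {name: 0 for name in self.weights}
        self.total = sum(self.weights.values())

    def next_server(self):
        # Every server earns credit equal to its weight on each pick.
        for name, weight in self.weights.items():
            self.current[name] += weight
        # The server with the most credit is chosen and pays back the total.
        chosen = max(self.current, key=self.current.get)
        self.current[chosen] -= self.total
        return chosen


balancer = SmoothWeightedRoundRobin({"large": 5, "small-1": 1, "small-2": 1})
sequence = [balancer.next_server() for _ in range(7)]
large_share = sequence.count("large")  # 5 of every 7 requests go to "large"
```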

IP Hash

IP Hash routes requests based on a value derived from the client’s IP address. The resulting hash consistently maps each client to the same backend server. Consistent routing supports session persistence for applications that store temporary user data locally. IP Hash is commonly used when shared session storage is unavailable or when maintaining connection affinity is required for correct application behavior.
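A minimal sketch of the mapping, assuming a fixed list of servers: hash the client address and take the result modulo the pool size. `hashlib` is used because Python's built-in `hash()` for strings varies between runs. The server names and the sample address are placeholders.

```python
import hashlib


def pick_by_ip(client_ip: str, servers: list) -> str:
    """Map a client IP to one server, the same one on every call."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]


servers = ["srv-a", "srv-b", "srv-c"]
first_visit = pick_by_ip("203.0.113.7", servers)
second_visit = pick_by_ip("203.0.113.7", servers)  # same server as first_visit
```

With plain modulo hashing, adding or removing a server remaps most clients. Consistent hashing is a common way to limit that churn.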

Least Connections

Least Connections directs new requests to the server handling the fewest active connections at the time of arrival. Routing decisions reflect current workload rather than fixed distribution rules. Least Connections reduces the risk of overload during periods of high concurrency by prioritizing less busy servers. Least Connections performs well in environments where session lengths vary and traffic volume fluctuates throughout the day.
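In code, the selection reduces to taking the minimum over a table of connection counts. The counts below are made-up sample values.

```python
def least_connections(active: dict) -> str:
    """Return the server currently holding the fewest active connections."""
    return min(active, key=active.get)


# Hypothetical snapshot of open connections per server.
active = {"srv-a": 12, "srv-b": 4, "srv-c": 9}

chosen = least_connections(active)
active[chosen] += 1  # the new request now counts against the chosen server
```

A real balancer would also decrement the count when a connection closes, so that the table keeps reflecting current load.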

Least Response Time

Least Response Time selects backend servers based on observed responsiveness and active request volume. Routing favors servers that can deliver faster responses rather than those with lower connection counts alone. Prioritizing responsiveness helps maintain consistent performance for latency-sensitive applications. Least Response Time adapts well to environments where backend performance shifts due to dynamic resource usage or shared infrastructure.
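The exact scoring formula differs between implementations. As an illustrative assumption, the sketch below multiplies a moving average of observed latency by the number of requests in flight plus one, and routes to the lowest score.

```python
class BackendStats:
    """Tracks smoothed latency and in-flight requests for one backend."""

    def __init__(self, name, alpha=0.3):
        self.name = name
        self.alpha = alpha   # weight given to the newest latency sample
        self.avg_ms = None   # exponentially weighted moving average
        self.active = 0      # requests currently in flight

    def record(self, latency_ms):
        if self.avg_ms is None:
            self.avg_ms = latency_ms
        else:
            self.avg_ms = self.alpha * latency_ms + (1 - self.alpha) * self.avg_ms

    def score(self):
        # Unmeasured backends score 0.0, so they are tried first.
        base = self.avg_ms if self.avg_ms is not None else 0.0
        return base * (self.active + 1)


def least_response_time(backends):
    return min(backends, key=lambda b: b.score())


fast, slow = BackendStats("fast"), BackendStats("slow")
fast.record(20.0)
slow.record(80.0)
idle_choice = least_response_time([fast, slow]).name  # "fast": 20 vs 80
fast.active = 5                                       # "fast" is now saturated
busy_choice = least_response_time([fast, slow]).name  # "slow": 80 vs 120
```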

Load Balancing in CDN Context

In Content Delivery Networks (CDNs), load balancing serves as the core mechanism that ensures fast, reliable, and scalable content delivery at a global level. Unlike traditional server farms, CDNs operate across hundreds or thousands of geographically distributed PoPs, and traffic decisions must account for multiple factors at once.

To manage this complexity, CDN load balancing directs each request to the optimal location, ensuring low latency, preventing congestion, and maintaining high performance. Its key functions include:

Efficient Static Asset Delivery

Static content—such as images, stylesheets, and media files—represents the primary workload for most CDNs. Load balancing determines which PoP responds to each request by evaluating cache availability and proximity to the end user.

By directing traffic to the nearest PoP with valid cached content, load balancing reduces delivery distance and lowers latency. Distributing requests across multiple PoPs also prevents localized congestion during traffic surges, allowing CDNs to maintain consistent performance even under sudden demand spikes. In this role, load balancing directly supports efficient and scalable static asset delivery.
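The trade-off between proximity and cache availability can be sketched as a cost function. Everything here is an assumption for illustration: the round-trip times, the fixed `MISS_PENALTY_MS` standing in for an origin fetch, and the PoP records themselves.

```python
MISS_PENALTY_MS = 120  # hypothetical extra delay when a PoP must fetch from origin


def select_pop(pops, asset):
    """Pick the healthy PoP with the lowest estimated delivery time."""
    healthy = [p for p in pops if p["healthy"]]
    if not healthy:
        raise RuntimeError("no healthy PoP available")

    def cost(pop):
        miss = 0 if asset in pop["cached"] else MISS_PENALTY_MS
        return pop["rtt_ms"] + miss

    return min(healthy, key=cost)["name"]


pops = [
    {"name": "pop-near", "rtt_ms": 15, "cached": set(), "healthy": True},
    {"name": "pop-mid", "rtt_ms": 40, "cached": {"/logo.png"}, "healthy": True},
    {"name": "pop-far", "rtt_ms": 180, "cached": {"/logo.png"}, "healthy": True},
]

cached_choice = select_pop(pops, "/logo.png")  # pop-mid: a cache hit beats the closer miss
uncached_choice = select_pop(pops, "/new.css")  # pop-near: nobody has it, so closest wins
```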

Global Traffic Coordination with GSLB

As CDN infrastructure expands across regions, traffic distribution decisions must move beyond regional awareness. Global Server Load Balancing introduces a coordination layer that evaluates the health, location, and reachability of PoPs and origin resources worldwide.

GSLB enables user requests to be routed to appropriate regions without unnecessary cross-region traversal. Such an approach improves origin resilience by reducing dependence on a single location while keeping traffic within optimal network paths. CDN providers like CDNetworks apply GSLB to support region-aware origin routing and automatic failover, helping maintain service availability during regional disruptions.

Protocol-Aware Traffic Routing

CDNs handle traffic across multiple transport protocols, each with distinct connection characteristics. Load balancing optimizes routing for each protocol rather than applying uniform handling:

- HTTP traffic: Load balancing reuses connections and manages sessions to reduce handshake overhead and improve throughput.

- HTTPS traffic: It handles secure connections efficiently, minimizing latency from encryption and SSL/TLS negotiation.

- QUIC traffic: Protocol-aware routing enables faster connection establishment and smoother handoffs in dynamic network conditions.

Together, these optimizations help maintain responsive application delivery across diverse environments.

Load Balancing FAQ

What Is Load Balancing?

Load balancing distributes network traffic across multiple servers to ensure no single resource becomes overloaded, maximizing system availability during high-demand periods.

What Is a Load Balancer?

A load balancer is a device or software controller that evaluates incoming requests in real time and directs each one to the most suitable server based on availability and health.

What Is the Difference Between Static and Dynamic Load Balancing Algorithms?

Static algorithms distribute traffic based on fixed rules, while dynamic algorithms utilize real-time performance data to adjust routing decisions based on active connections and server latency.

What Is GSLB?

Global Server Load Balancing (GSLB) directs users to the nearest data center, reducing latency across multiple worldwide regions and providing automated failover during regional outages.

What Are the Key Benefits of CDNetworks’ Origin Load Balancing?

The Origin Load Balancing provided by CDNetworks delivers tangible benefits for businesses, including:

- Improved stability and uptime by distributing workloads across multiple origin servers

- Faster recovery from failures through proactive detection of server abnormalities and automatic failover

- Enhanced performance and lower latency with region-based origin fetching